Regulation Becomes a Tool

AI Lobby Is Already Winning

Regulatory capture is one of the oldest ways companies stop competition while pretending they are protecting the public. It is when an industry helps create the rules that govern that industry, then uses those rules to build a moat around itself. The public hears “safety.” The politicians hear “responsibility.” The company hears “no more competition.”

And we are watching it happen in real time.

We are seeing it in cryptocurrency. We are seeing it in AI. And after watching the All-In podcast the other day, where David Sacks (Trump’s AI czar) basically screamed for everyone to pay attention, I think this needs to be said plainly. Because the trick here is not subtle once you understand the playbook.

First, you amplify the problem.

Then you present yourself as the responsible actor.

Then you fund the politics around the problem.

Then you help write the rules.

Then the rules just so happen to favor the companies already big enough to comply.

That is regulatory capture.

And right now, Anthropic is giving us a case study.

Step One: Create the Fear, Then Offer the Cure

For AI companies to move into Washington and ask for regulation, there has to be a problem. And to be clear, there are real problems. People are worried about AI for legitimate reasons. They are worried about jobs, water use, electricity use, data centers, farmland, privacy, deepfakes, national security, and whether these companies are building something society cannot control.

Those concerns are not fake. People are right to be concerned.

But this is where the manipulation starts. A company can take real public concern, amplify it, wrap itself in responsibility, and then show up in Washington saying, “Please regulate us before something terrible happens.”

That sounds altruistic. It sounds like an industry is finally taking responsibility.

But…

Dario Amodei, the CEO of Anthropic, has become one of the loudest AI doom voices in the industry. He has warned about massive white-collar job losses. He has talked about unemployment spikes. He has warned about AI writing most code. He has raised concerns about civilization-level threats, biological weapons, autonomous weapons, deception, blackmail, and systems that may operate beyond human control.

When the CEO of one of the biggest AI companies spends a lot of time warning the public that AI could be dangerous, and then that same ecosystem starts moving money into politics to shape AI regulation, you should at least stop and ask what the hell is going on.

Because the message is very convenient.

AI is dangerous.

We are the responsible ones.

The government must act.

We are here to help write the solution.

That is the first step.

Step Two: Build the Political Machine

Once the fear is established, the next step is political money.

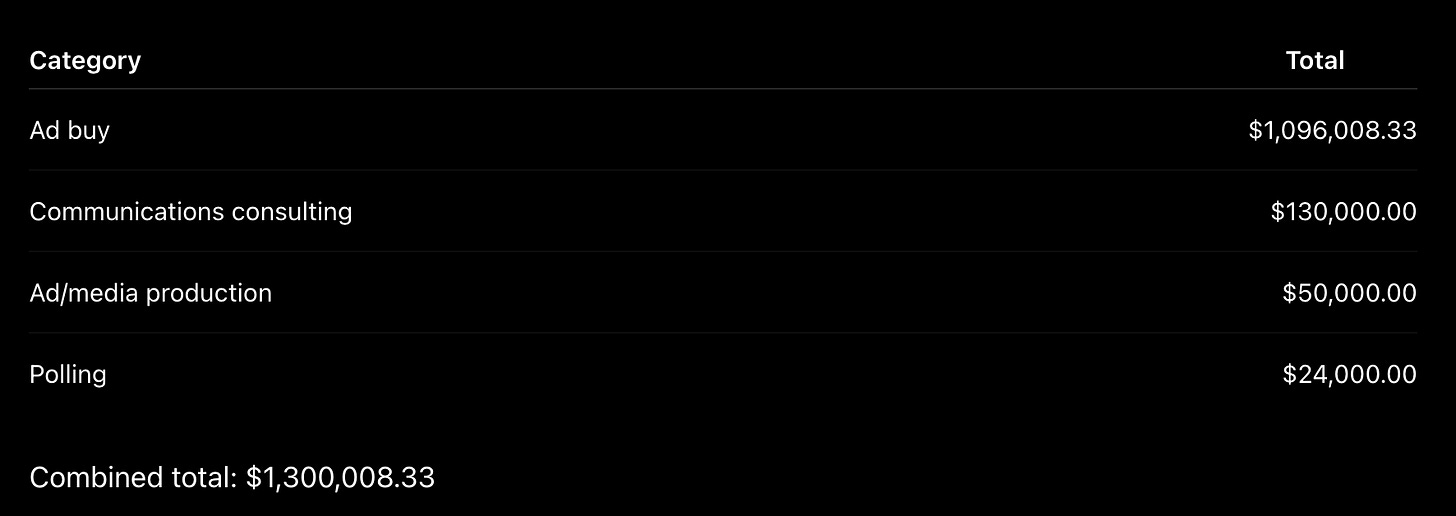

Enter the Jobs and Democracy PAC, funded by Anthropic. According to the information I am working from, Anthropic put $20 million into this PAC, and the PAC recently spent almost $500,000 on TV ads supporting Brian Poindexter in his congressional primary. Its total spending since January 1, 2026 comes to $1.3 million (as far as we know).

Now, let’s talk about what a Super PAC does.

Anthropic knows exactly what a Super PAC is. They know it can spend unlimited money promoting candidates. They know this is considered political speech. They also know Super PACs cannot legally coordinate with campaigns.

And that is not just a bug in the system. That is also a very convenient feature.

Because it gives everyone plausible deniability.

The campaign can say, “We cannot control what outside groups do.” The candidate can say, “We are not coordinating with them.” The PAC can say it is independent. The company can say it is supporting “responsible regulation.” And the voters are supposed to pretend all of these walls are made of steel instead of paper.

The second layer is even more important. This is not just any corporate-funded PAC. This is a PAC that can present itself as being for democracy, jobs, and responsible AI regulation. So when people raise concerns about corporate money, the response becomes, “Well, isn’t this good? Don’t we want AI regulated? Don’t we want responsible technology?”

And that is exactly the trap.

Because the regulation being demanded is already being framed by the same industry that benefits from it.

Step Three: Write the Rules Before Anyone Else Understands the Game

Most members of Congress do not understand AI. They do not understand crypto either. They do not understand the technical systems, the market dynamics, the open-source implications, the infrastructure requirements, or the long-term competitive consequences.

That is not even necessarily an insult. These are massive, complicated fields, and Congress deals with everything from agriculture to defense to health care to tax law to foreign policy. Nobody knows everything.

So what happens?

Lobbyists show up.

“Experts” show up.

PAC-funded influence networks show up.

Industry-backed nonprofits show up.

Lobbyists buy million-dollar townhouses in Washington, host events, build relationships, circulate white papers, draft model legislation, and slowly become the people lawmakers rely on to understand the issue.

Then when the time comes to regulate AI, who already has the framework written?

The companies, through their lobbyists.

Some lawmakers will listen to Anthropic. Some will listen to OpenAI. Some will listen to Google. Some will listen to xAI. Some will listen to whoever has the best relationships, the best lobbyists, the best events, or the most money moving around the political system.

And then the rules start to take shape.

On the surface, they will sound reasonable. They always do. Testing requirements. Licensing. Know-your-customer systems. Safety reviews. Registration. Reporting. Compliance departments. Liability rules. Security audits.

Again, I am not saying every one of those ideas is bad.

But ask who can afford them.

Who can hire the lawyers? Who has the congressional friends? Who can build the compliance teams? Who can survive years of regulatory process? Who can meet complicated federal requirements? Who can lobby for technical language that looks neutral but just happens to crush open-source competitors and smaller startups?

The biggest companies can.

That is the moat.

The Rockefeller Lesson

This is why the David Sacks analogy on All-In was so useful. He laid out a thought experiment about John D. Rockefeller and Standard Oil. Rockefeller was one of the most ruthless monopolists in American history, but Sacks made the point that Rockefeller was terrible at public relations.

So imagine if he had been better at it.

Imagine if Rockefeller had not called his company Standard Oil. Imagine he called it Safe Oil. Imagine he went around warning everyone that kerosene was dangerous. Which, of course, it was. It could light your house, burn it down, torch a city, or be used to make a bomb.

Then imagine Rockefeller calling for a government agency to regulate kerosene safety. Testing. Licensing. Common-sense standards. Rules for wick thickness. Rules against dangerous independent refiners. A whole debate over what makes oil safe.

People would have gotten lost in the debate over safety while missing the real move. Rockefeller would have been building the richest, most powerful monopoly in history under the moral cover of protecting the public.

That is the point.

A company can be right about danger and still be using that danger to eliminate competition.

That is what people need to understand.

Competition Is the Threat They Actually Fear

Companies do not like competition. Big companies especially do not like open-source competition. Digital companies do not like open ecosystems when closed ecosystems make more money. They want users trapped inside their products, their models, their platforms, their APIs, their pricing structures, and their rules.

Open-source AI is a threat to that. Smaller startups are a threat to that. Independent developers are a threat to that. Competitive markets are a threat to that.

So if you are a massive AI company, what is the best way to stop them?

You do not say, “Please ban our competitors because we would like to become a monopoly.” That would be too obvious.

You say, “This technology is dangerous, and we need serious safety rules.”

Then you help define the rules.

Then those rules require compliance systems that only a few companies can afford.

Then the small companies die. The open-source models get squeezed. The independents get buried under red tape. And the largest companies carve up the industry as they see fit.

That is regulatory capture.

And that is why David Sacks (remember, he is the Trump administration’s AI and crypto czar) was warning that if Anthropic continues on its current trajectory, it could become one of the most powerful monopolies ever created. He also pointed directly at anticompetitive behavior.

The Political Trick

This is why I keep coming back to the money in these races.

A lot of people hear that a corporate-backed PAC supports AI regulation and think, “Well, that sounds good.” They hear that the PAC cannot coordinate with the candidate and think, “Well, then it is separate.” They hear that the company is worried about AI safety and think, “Well, maybe they are being responsible.”

But that is how the whole structure works.

The company amplifies the fear.

The company funds the political vehicle.

The political vehicle supports candidates.

The candidates can deny coordination.

The PAC can claim moral purpose.

The public gets told the whole thing is about safety.

Then the industry helps write the regulations that determine who survives.

That is not fucking benign. That is not some innocent little civic engagement project. That is a complete fucking manipulation.

And yes, people will say, “But we need regulation.”

Fine. I agree.

But regulation written by whom? For whose benefit? With whose money? With whose lobbyists in the room? With which competitors excluded? With which open-source projects crushed? With which candidates supported along the way?

Those are the questions.

This Is How Power Works

The entire point of this is not that AI should be unregulated. The point is that we need to understand how regulation can be used as a weapon.

If we are not careful, we are going to wake up in a world where a handful of AI companies wrote the rules for everyone else, crushed open competition, trapped users inside closed systems, and then told us they did it all for our own safety.

Create the problem, or amplify it.

Present yourself as the responsible adult.

Fund the politics.

Write the rules.

Kill the competition.

Call it safety.

That is why this matters. And that is why we cannot just shrug and say, “Well, they support regulation, so it must be fine.”

Because sometimes the most dangerous power grab does not come from the company saying regulation is bad.

Sometimes it comes from the company saying, “Please regulate us.”